Think AI hallucinations are bad? Here's why you're wrong

Key Takeaways

- AI hallucinations stem from LLMs being rewarded for answering, not for indicating uncertainty.

- Unlike deterministic software, LLMs are probabilistic systems designed to offer the most likely response.

- Serious real-world consequences, including legal and safety concerns, arise from unchecked AI outputs.

- Mitigating hallucinations requires careful data input, specific prompting, and human oversight.

Generative AI models, including the most advanced LLMs, are fundamentally probabilistic: they are optimized to produce the most plausible answer given their training data, not a definitively true one. According to TechRadar contributor Steve Phillips, Co-founder, Executive Chair, and Chief Innovation Officer of Zappi, this design choice means that expecting an LLM never to hallucinate is an unrealistic demand that stems from misunderstanding the technology. Phillips recounts an instance in which an LLM mistakenly attributed issues to his company's "electricity structure systems," conflating it with an unrelated EV charger manufacturer that shares its name.

Frequently Asked Questions

AI hallucinations are instances where large language models (LLMs) confidently generate incorrect or nonsensical information. These errors are inherent to how LLMs are designed and trained because they are probabilistic systems optimized to produce the most plausible answer based on training data, not necessarily a definitively true one. LLMs are rewarded for providing an answer, even if it's a guess.

It's unrealistic to expect AI models to never hallucinate because they are fundamentally probabilistic, not deterministic. Unlike traditional software that provides precise answers, LLMs are designed to offer the most likely response based on patterns in their training data. Demanding perfect accuracy from a probabilistic system is a misunderstanding of the technology itself.
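To make the probabilistic point concrete, here is a toy sketch in Python. The candidate answers, their scores, and the function names are all invented for illustration and do not come from any real model. It only shows that picking the most likely candidate always yields an answer, even when no candidate is especially probable.

```python
import math

def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(scores.values())
    exps = {k: math.exp(v - peak) for k, v in scores.items()}
    total = sum(exps.values())
    return {k: e / total for k, e in exps.items()}

def most_plausible(scores):
    """Return the highest-probability candidate and its probability."""
    probs = softmax(scores)
    best = max(probs, key=probs.get)
    return best, probs[best]

# Hypothetical scores for "which company does this name refer to?"
candidate_scores = {
    "the author's company": 1.2,
    "the EV charger maker": 1.0,
    "unknown": 0.3,
}

answer, confidence = most_plausible(candidate_scores)
print(f"{answer} (p={confidence:.2f})")
```

In this made-up case the winning candidate has less than a 50% probability, yet it is still returned as the answer. Nothing in the selection step checks whether it is true.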

AI hallucinations can have serious real-world consequences, including legal and safety concerns. For example, a lawsuit alleges that Google's Gemini chatbot contributed to a fatal delusion. Even in less critical applications, confidently wrong answers from AI can lead to disastrous outcomes if not properly checked and validated.

Mitigating AI hallucinations requires a multi-faceted approach, including careful data input, specific prompting techniques, and consistent human oversight. Since LLMs are rewarded for providing answers, even if incorrect, it's crucial to refine models through reinforcement learning that penalizes inaccuracy. Users should also understand the probabilistic nature of AI and not expect perfect accuracy.
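The incentive argument can be illustrated with a small expected-score calculation. The scoring scheme below (+1 for a correct answer, a configurable penalty for a wrong one, 0 for abstaining) is an assumption chosen for illustration, not a description of how any particular model is trained, and the function names are hypothetical.

```python
def expected_score(p_correct, wrong_penalty):
    """Expected score of answering: +1 if right, -wrong_penalty if wrong."""
    return p_correct * 1.0 - (1.0 - p_correct) * wrong_penalty

def should_answer(p_correct, wrong_penalty):
    """Answer only when guessing beats abstaining, which scores 0."""
    return expected_score(p_correct, wrong_penalty) > 0.0

# A guess that is right only 30% of the time, under three penalty settings.
for penalty in (0.0, 1.0, 3.0):
    decision = "answer" if should_answer(0.3, penalty) else "abstain"
    print(f"penalty={penalty}: {decision}")
```

With no penalty for wrong answers, guessing never scores worse than abstaining, so a system optimized under that scheme has no reason to say "I don't know." Once wrong answers cost something, answering only pays off when the probability of being right exceeds penalty / (1 + penalty).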

Related Articles

More insights on trending topics and technology

AI Startups Stop Retail's Invisible Profit Leaks

Top Tech Startups Drive Today's Innovation

Figma Make: Master Builds with Context & Control

Ace BI Engineering: 30 AI Era Interview Questions

A Developer Cut Claude's Token Use by 75% — With Broken English

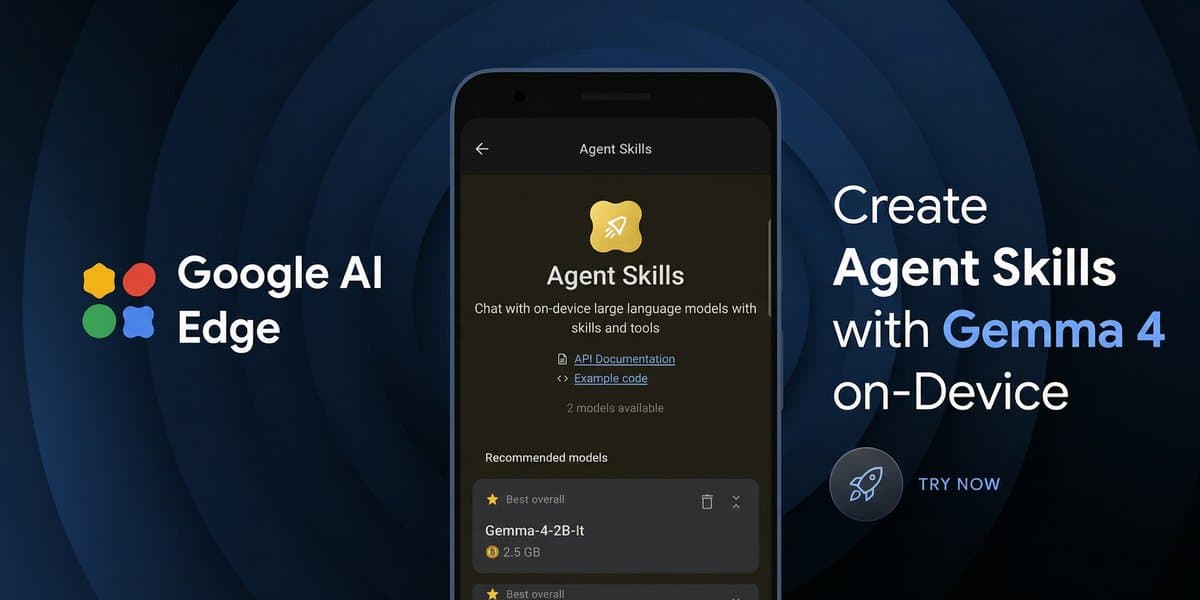

Gemma 4 Powers Agentic AI at the Edge