Alphabet's Google Cloud has solidified its commitment to Intel processors, signing a multi-year agreement to embed Intel Xeon CPUs at the core of its AI infrastructure. This expanded collaboration extends across multiple generations of Xeon chips and includes joint development of custom Infrastructure Processing Units (IPUs), securing Intel's role in powering Google's global data centers for AI workloads and general computing.

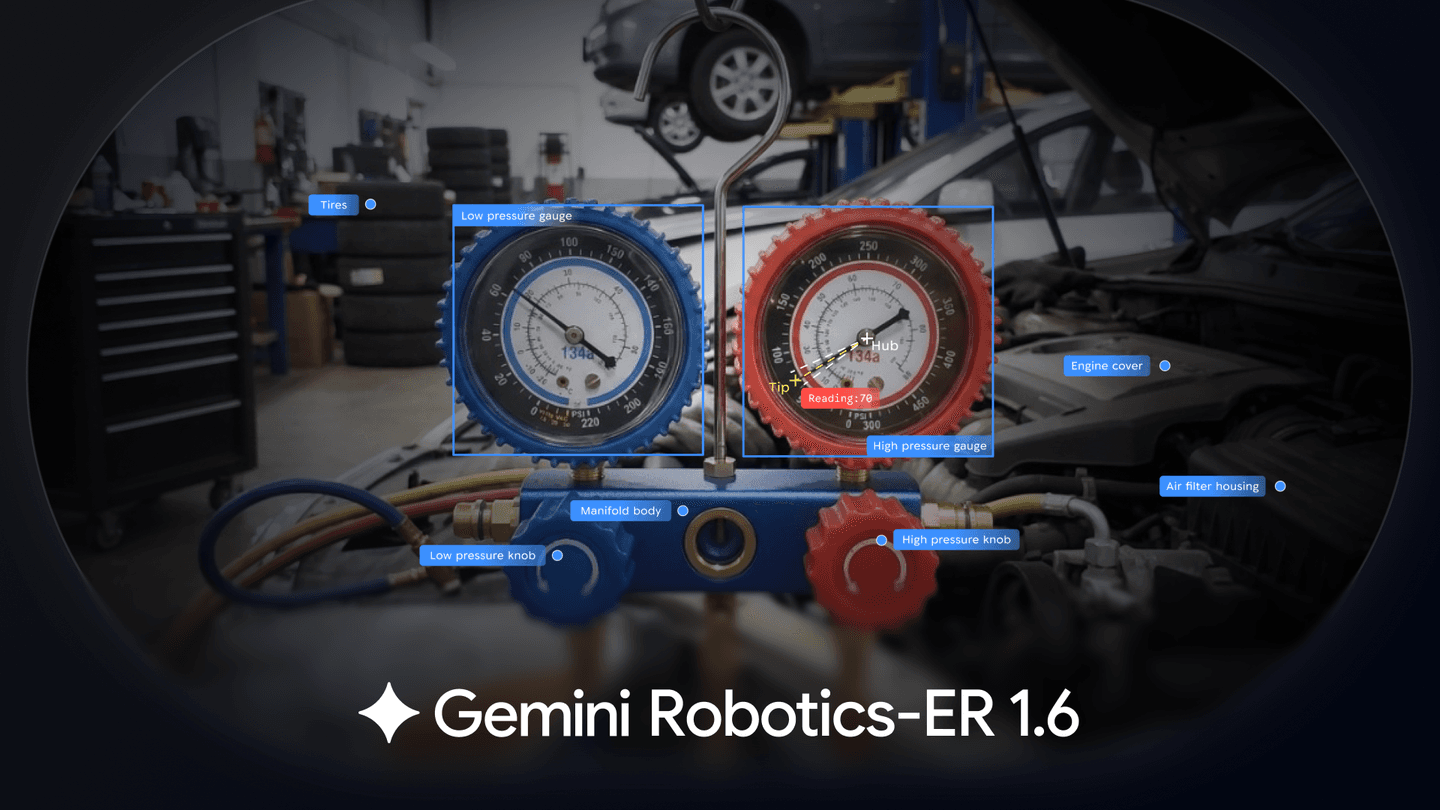

The deal comes as hyperscale cloud providers increasingly adopt custom Arm-based processors for AI tasks. The partnership reinforces Intel's position in a competitive market and addresses the growing demand for CPUs that handle the deployment and inference stages of AI models, as distinct from the GPU-intensive training phase.

Intel Xeon Processors Retain Core Cloud Role

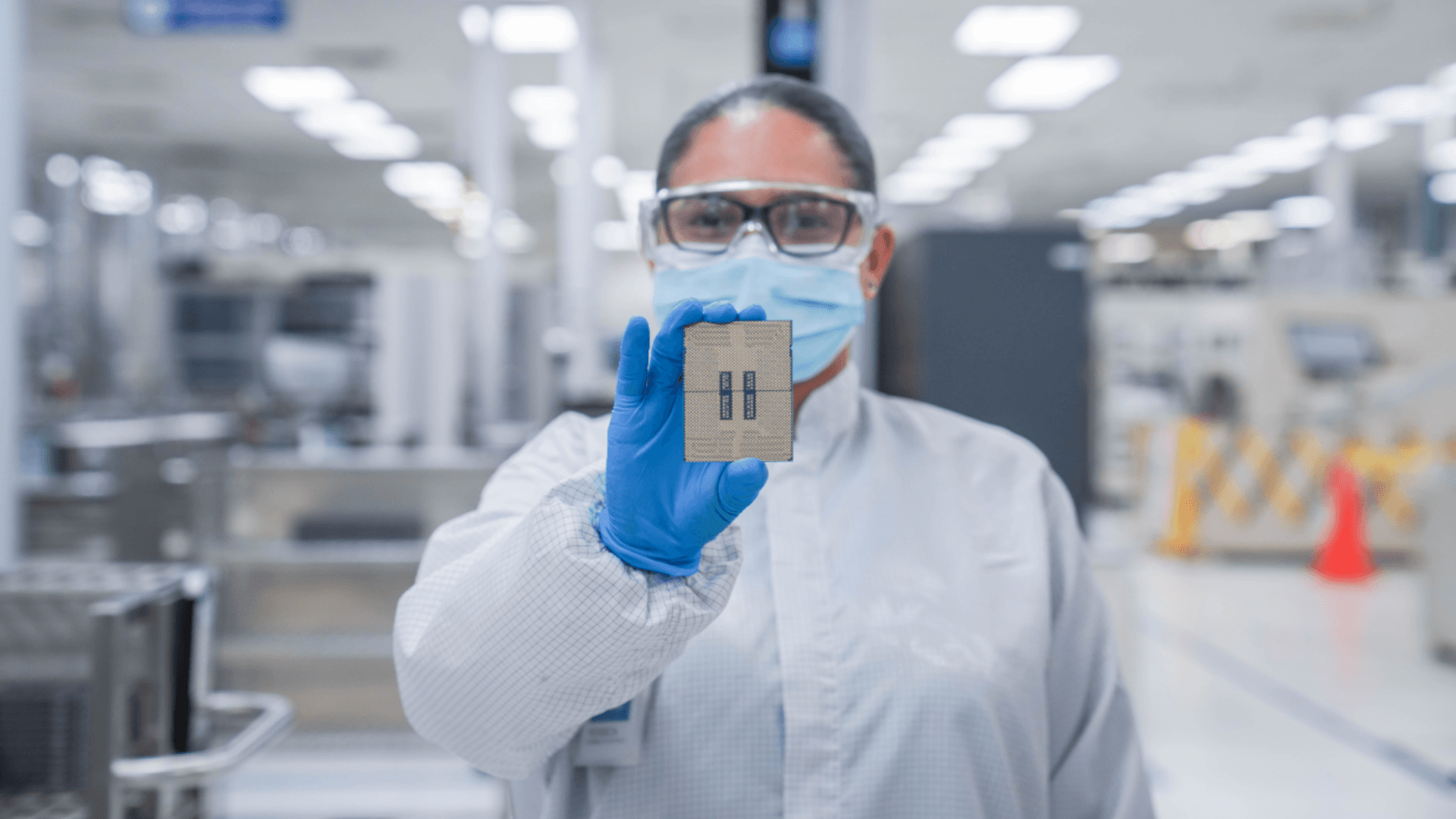

Google Cloud instances like C4 and N4 already utilize Xeon 6 processors. This new multi-year agreement ensures that the pattern continues, with future generations of Xeon chips powering a broad range of workloads including AI inference and general-purpose computing across Google's global infrastructure, according to Intel. This commitment highlights the persistent need for x86 architecture, particularly for backward compatibility and the significant single-thread performance that Xeon CPUs offer.

While graphics processing units (GPUs) are essential for training complex AI models, central processing units (CPUs) remain critical for running those models in production. CNBC reports that Intel produces its latest Xeon processor using its advanced 18A process technology.

Custom IPUs Offload Infrastructure Tasks

Beyond CPUs, Intel and Google are expanding their co-development of custom Infrastructure Processing Units (IPUs), a collaboration that began in 2022. These IPUs are specialized, ASIC-based accelerators designed to offload fundamental cloud infrastructure tasks like networking, storage, and security from the main host CPUs.

By moving these functions to dedicated hardware, the IPUs free up Xeon processors to focus entirely on application execution, including AI tools and large language models. This separation improves system efficiency and optimizes resource allocation across large cloud deployments, enabling more predictable performance in hyperscale AI environments. TechRadar notes that 90% of AI servers running custom silicon are projected to rely on Arm's instruction set architecture, making Intel's strategy with IPUs crucial for retaining market share.

Amin Vahdat, SVP and Chief Technologist for AI Infrastructure at Google, emphasized that "CPUs and infrastructure acceleration remain a cornerstone of AI systems—from training orchestration to inference and deployment." This combined approach of robust CPUs and specialized IPUs underpins Google's strategy for scaling AI efficiently.