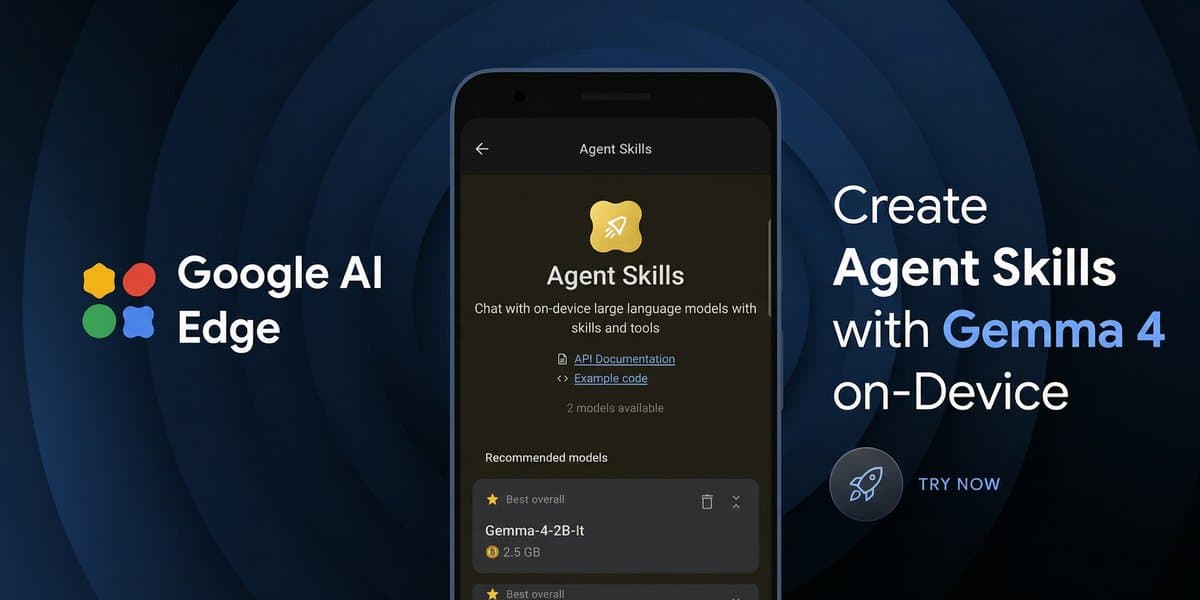

Anthropic's Claude Code, an AI agent for developers, now supports running local Large Language Models (LLMs) like Qwen3.5 and GLM-4.7-Flash through an integration with llama.cpp, according to Unsloth Documentation. This capability allows developers to leverage custom or open-source models for coding tasks directly on their machines, enhancing privacy and customization while powering Claude Code's new autonomous computer control features. Developers can now switch out Anthropic's default models for optimized local alternatives, gaining significant control over their AI development environment.

Why Run Local LLMs with Claude Code?

Imagine having a super-smart coding assistant that can not only understand your code but also execute tasks on your computer. Now, imagine you can swap out its "brain" for one you’ve trained yourself or picked from a community of open-source innovators. That's the core idea behind running local LLMs with Claude Code. This integration bypasses cloud-based APIs, delivering a more private, customizable, and often faster development experience right on your local machine.