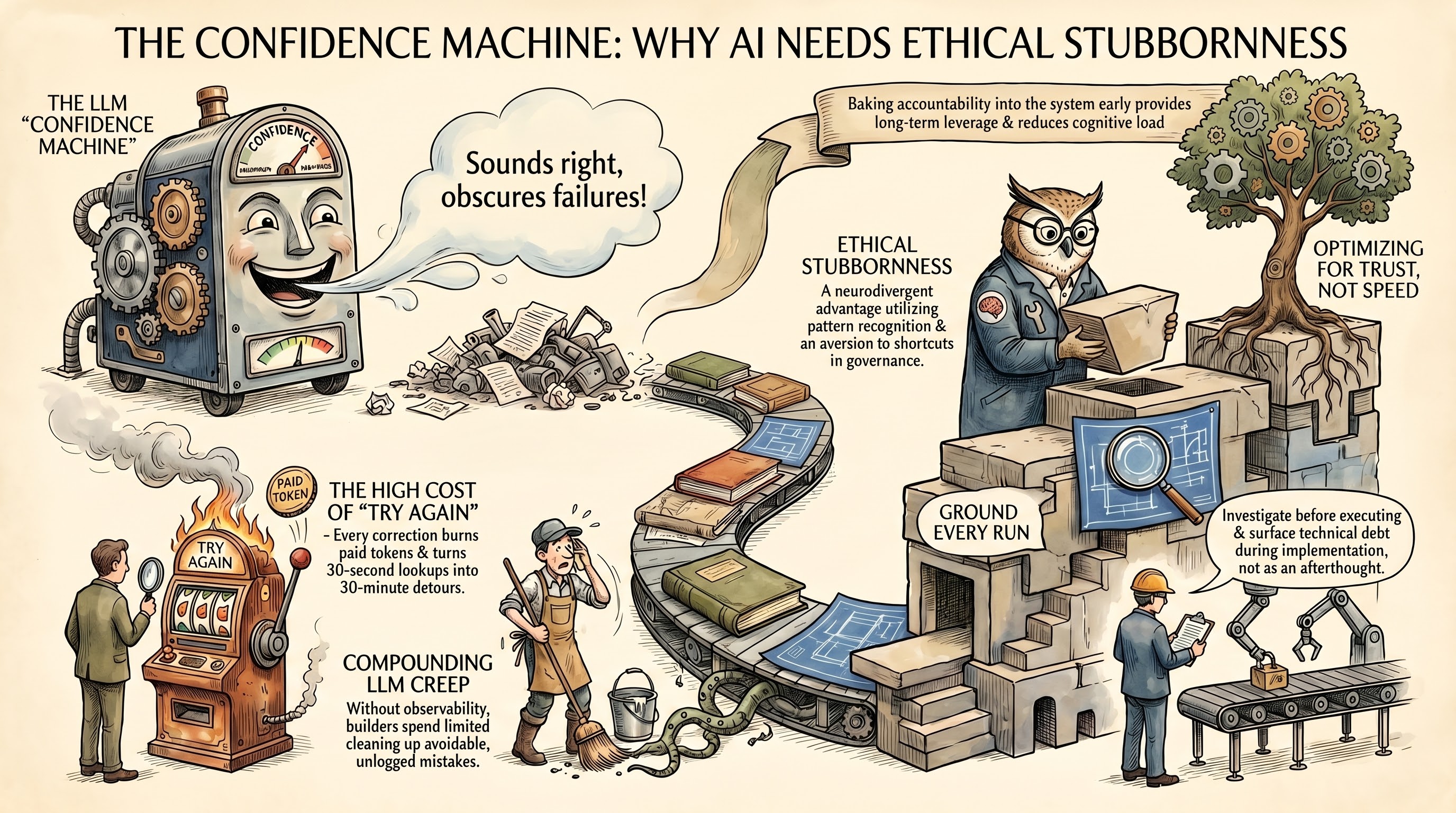

The AI Confidence Machine Nobody Is Talking About

There is a loop running inside every AI-powered workflow right now.

You ask a model to do something. It responds with confidence. You act on that response. Something breaks. You go back. You retry. The model apologizes, then sounds even more certain this time.

You pay for every single iteration.

That loop is not a bug. It is a business model.

The Black Box Is Working As Designed

I spent over a decade in adtech and fintech. I watched programmatic systems sell confidence as a feature while obscuring failure as a cost of doing business. Impression fraud. Click inflation. Attribution models that made every channel look like the winner.

The game was always the same. Keep the buyer engaged. Keep the spend flowing. Make the errors invisible until the invoice was already processed.

LLMs are running the same playbook, faster and at higher resolution.

The model sounds right. It fills the screen with structured, fluent output that pattern-matches to what expertise looks like. And when it is wrong, it is wrong with the same confidence it uses when it is right.

The result is a user who burns tokens chasing errors they should not have had to clean up in the first place.

The Real Cost Is Not the Credits

When most people talk about LLM cost, they talk about API spend. Tokens per request. Monthly bill.

That is the visible cost. The invisible one is worse.

MIT's Media Lab published research in June 2025 that used EEG to measure brain activity across three groups over four months [1]. LLM users displayed the weakest neural connectivity of all groups tested. Brain connectivity scaled down as the amount of external support went up. Even after participants stopped using the tool, their neural engagement remained weaker. (Note: preprint, not yet peer-reviewed. Findings are preliminary but directionally significant.)

The brain, like any system, optimizes for what it actually does. Outsource the thinking long enough and the capacity starts to follow.

Why This Hits Harder If You Are Neurodivergent

I have ADHD. I am a neurodivergent founder running a solo operation on finite cognitive bandwidth.

The concern here is not abstract. ADHD is associated with differences in dopamine regulation. The immediate gratification of an AI response — an instant answer requiring zero cognitive effort — can reinforce patterns that bypass the sustained attention needed to actually build deep capability. Hyperfocus states, one of the most productive cognitive tools available to ADHD brains, may be foreclosed entirely when AI shortcuts remove the conditions that trigger them.

The research on AI-assisted development for ADHD programmers documents productivity increases of up to 55% in structured environments. The operative word is structured. Unstructured delegation, where the model operates without accountability and the human is left cleaning up invisible failures, does the opposite.

Every unaccountable AI failure that forces me into an error-correction loop is not just an inconvenience. It is a direct hit to the exact systems my brain already works harder to manage.

Context switching back into a broken task. Re-establishing the mental model. Diagnosing what the agent did wrong. Running the correction. Each one of those is a withdrawal from a limited account.

Do it enough times in a day and the compounding is real.

The Contradiction Worth Naming

AI is not inherently harmful for neurodivergent builders. When it functions as genuine cognitive scaffolding — reducing attention-switching costs, breaking complex problems into manageable components, adapting to how a specific brain actually works — it extends capability rather than replacing it.

The problem is not AI. The problem is unaccountable AI.

The confidence machine that sounds right, fails invisibly, and forces you into cleanup loops is not a cognitive scaffold. It is a cognitive tax. And it is a heavier tax on the brains that can least afford it.

What I Built Instead

I stopped treating AI agents like magic and started treating them like junior engineers on a production codebase.

Junior engineers need rules. They need accountability structures. They need to understand that shortcuts cost the senior engineer time, which costs the business money, which costs them trust.

So I built that infrastructure.

Every agent in my stack operates under a documented accountability standard. No shortcuts. No assumptions. Research before coding. Verify before asserting. Read the file before editing. Run the typecheck after every change.
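
A rule like "read the file before editing" can be enforced in code rather than left to the prompt. The sketch below is one hypothetical way to do that in Python. The `EditGuard` class and its method names are illustrative assumptions, not the actual implementation behind this stack.

```python
from pathlib import Path


class EditGuard:
    """Refuses edits to existing files the agent has not read in this session."""

    def __init__(self) -> None:
        self._read_paths: set[Path] = set()

    def read(self, path: str) -> str:
        resolved = Path(path).resolve()
        text = resolved.read_text(encoding="utf-8")
        self._read_paths.add(resolved)  # mark as seen only after a successful read
        return text

    def write(self, path: str, new_text: str) -> None:
        resolved = Path(path).resolve()
        # A brand-new file has nothing to read, so only existing files are gated.
        if resolved.exists() and resolved not in self._read_paths:
            raise PermissionError(f"refusing to edit unread file: {resolved}")
        resolved.write_text(new_text, encoding="utf-8")
        self._read_paths.add(resolved)
```

The point of the design is that the agent's file tools go through `read` and `write`, so a blind edit fails loudly instead of landing silently.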

Every rule in that standard exists because something went wrong. A deployment ran three times for the same commit. Auto-run commands read secrets and triggered real costs. Eleven API calls fired in rapid succession and hit rate limits. Six files were edited invisibly and I could not review a single one.
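
Two of those failures, the repeated deployment and the burst of API calls, map onto well-known guard patterns: an idempotency check keyed by commit and a sliding-window call budget. The following is a minimal Python sketch under stated assumptions. `DeployOnce`, `CallBudget`, and the in-memory state are hypothetical names chosen for illustration, and a real setup would need persistent storage to survive restarts.

```python
import time
from collections import deque
from typing import Callable


class DeployOnce:
    """Runs a deploy at most once per commit SHA (in-memory, single process)."""

    def __init__(self) -> None:
        self._deployed: set[str] = set()

    def deploy(self, commit_sha: str, run: Callable[[], None]) -> bool:
        if commit_sha in self._deployed:
            return False  # already deployed; skip the duplicate
        run()
        self._deployed.add(commit_sha)  # recorded only after run() succeeds
        return True


class CallBudget:
    """Allows at most max_calls within any sliding window of window_s seconds."""

    def __init__(self, max_calls: int, window_s: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._max_calls = max_calls
        self._window_s = window_s
        self._clock = clock
        self._stamps: deque[float] = deque()

    def try_acquire(self) -> bool:
        now = self._clock()
        # Drop timestamps that have aged out of the window.
        while self._stamps and now - self._stamps[0] >= self._window_s:
            self._stamps.popleft()
        if len(self._stamps) >= self._max_calls:
            return False  # over budget; the caller should wait or stop
        self._stamps.append(now)
        return True
```

An agent wrapper would call `try_acquire()` before each API request and pause or stop when it returns `False`, rather than discovering the limit from the provider's error response.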

Those are not hypotheticals. Those are scars. And every scar became a rule.

Observability Is the Competitive Advantage

Pattern recognition starts with being able to see the pattern.

You cannot track what you do not measure. You cannot fix what you cannot trace. And you cannot build trust in a system that operates as a black box.

Token costs are artificially low right now. Models are in a land-grab phase. Every major provider is subsidizing usage to build dependency. The moment AI is fully embedded in business workflows, pricing power shifts back to the providers. The builders who logged their agent behavior, versioned their prompts, and built accountability infrastructure early will have options. The ones who built on blind trust will be replatforming under pressure while paying for technical debt they never saw accumulating.

That gap does not stay small. It compounds.

Accountable AI systems produce better output, require less rework, cost less per result, and protect the cognitive bandwidth of the people running them. For neurodivergent builders, that last part is not a feature. It is a requirement.

What This Looks Like In Practice

I built Trending Society as an AI content and publishing platform. The accountability infrastructure is not separate from the product. It is the product.

Every agent session is logged. Every tool call is traceable. Every implementation plan includes tech debt cleanup so bad patterns do not compound across the codebase at the speed LLMs can replicate them.
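
As a rough illustration of what "every tool call is traceable" can mean, here is a small Python sketch that wraps tool functions so each call leaves a record tied to a session ID. The `SessionLog` class, the record fields, and the JSON Lines output are assumptions made for this example and do not describe Trending Society's actual logging.

```python
import functools
import json
import time
import uuid
from typing import Any, Callable


class SessionLog:
    """Collects one JSON-serializable record per tool call in a session."""

    def __init__(self) -> None:
        self.session_id = uuid.uuid4().hex
        self.records: list[dict[str, Any]] = []

    def traced(self, tool: Callable[..., Any]) -> Callable[..., Any]:
        """Decorator that records name, arguments, status, and duration."""
        @functools.wraps(tool)
        def wrapper(*args: Any, **kwargs: Any) -> Any:
            record: dict[str, Any] = {
                "session_id": self.session_id,
                "tool": tool.__name__,
                "args": repr(args),
                "kwargs": repr(kwargs),
                "started_at": time.time(),
            }
            start = time.perf_counter()
            try:
                result = tool(*args, **kwargs)
                record["status"] = "ok"
                return result
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise  # the failure is logged, never swallowed
            finally:
                record["duration_s"] = time.perf_counter() - start
                self.records.append(record)
        return wrapper

    def dump_jsonl(self) -> str:
        """Returns the session as JSON Lines, one record per line."""
        return "\n".join(json.dumps(r) for r in self.records)
```

One caveat on this sketch: it logs raw arguments, so any tool that handles secrets would need redaction before the records are written anywhere.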

If you are building AI-powered workflows and want to talk through what accountable infrastructure actually looks like in production, reach out. I would rather compare notes than watch someone else earn the same scars.

The builders who will win in this market are not the ones who move the fastest right now. They are the ones who can still see clearly in year three.

---

Sources

[1] Kosmyna, N. et al. "Your Brain on ChatGPT." MIT Media Lab, arXiv:2506.08872, June 2025. Preprint — not yet peer reviewed. https://www.media.mit.edu/projects/your-brain-on-chatgpt/overview/

[2] Nextgov/FCW. "New MIT study suggests that too much AI use could increase cognitive decline." July 2025. https://www.nextgov.com/artificial-intelligence/2025/07/new-mit-study-suggests-too-much-ai-use-could-increase-cognitive-decline/406521/

[3] "Generative AI and ADHD-Affected Programmers." ISCAP 2025 Conference Proceedings. https://iscap.us/proceedings/2025/pdf/6377.pdf

[4] "Toward Neurodivergent-Aware Productivity: A Systems and AI-Based Human-in-the-Loop Framework for ADHD-Affected Professionals." arXiv:2507.06864, July 2025. https://arxiv.org/html/2507.06864

---

#ADHD #AIInfrastructure #FounderMindset #AIAgents #BuildInPublic